ContactHands

A large-scale dataset of in-the-wild images for hand detection and contact recognition.

Supreeth Narasimhaswamy¹, Trung Nguyen², Minh Hoai¹,²

¹Stony Brook University, Stony Brook, NY 11790, USA

²VinAI Research, Hanoi, Vietnam

This work is based on the paper "Detecting Hands and Recognizing Physical Contact in the Wild" (NeurIPS 2020). We collect a large-scale dataset of unconstrained images and annotate hand locations and their contact states.

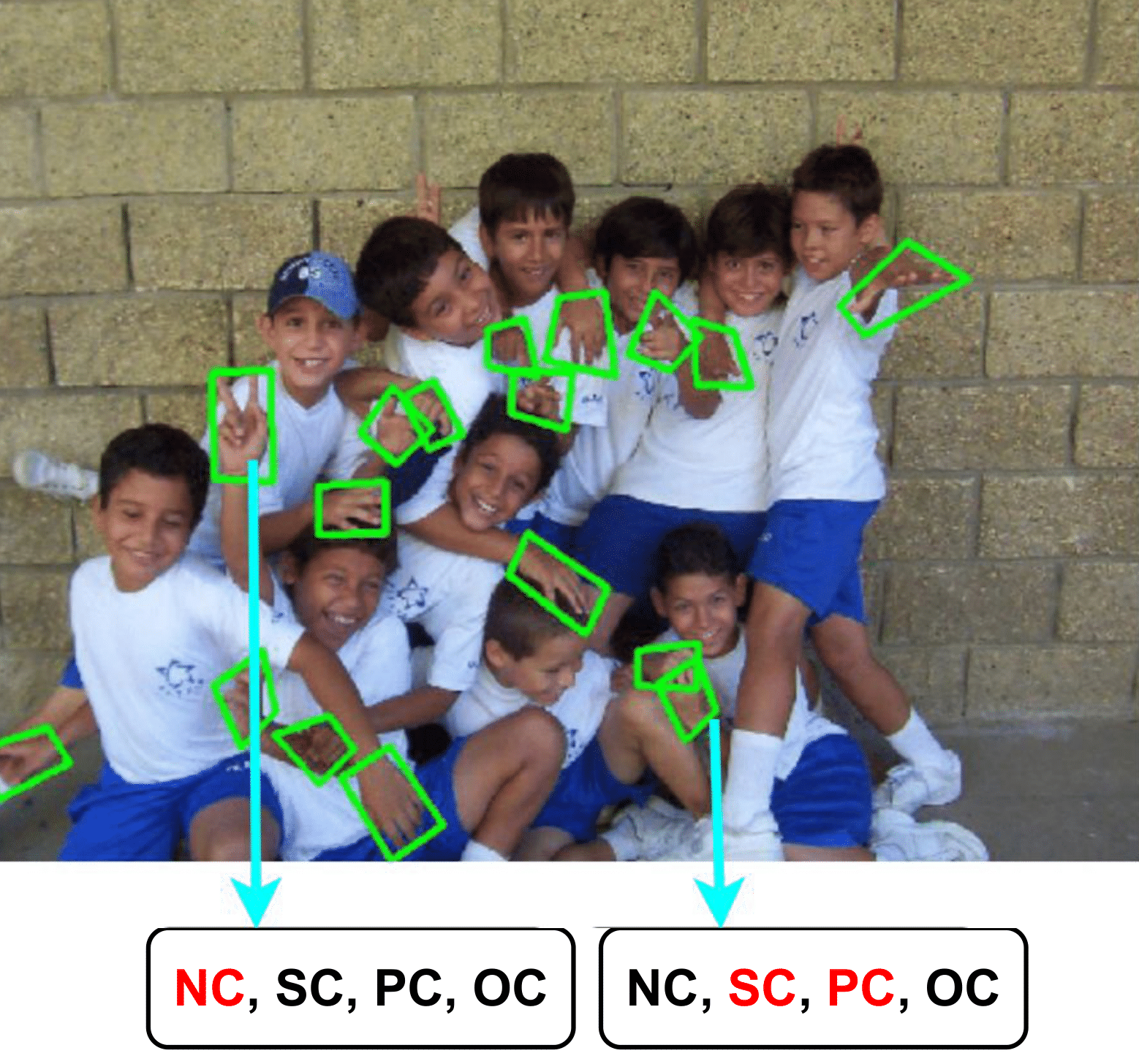

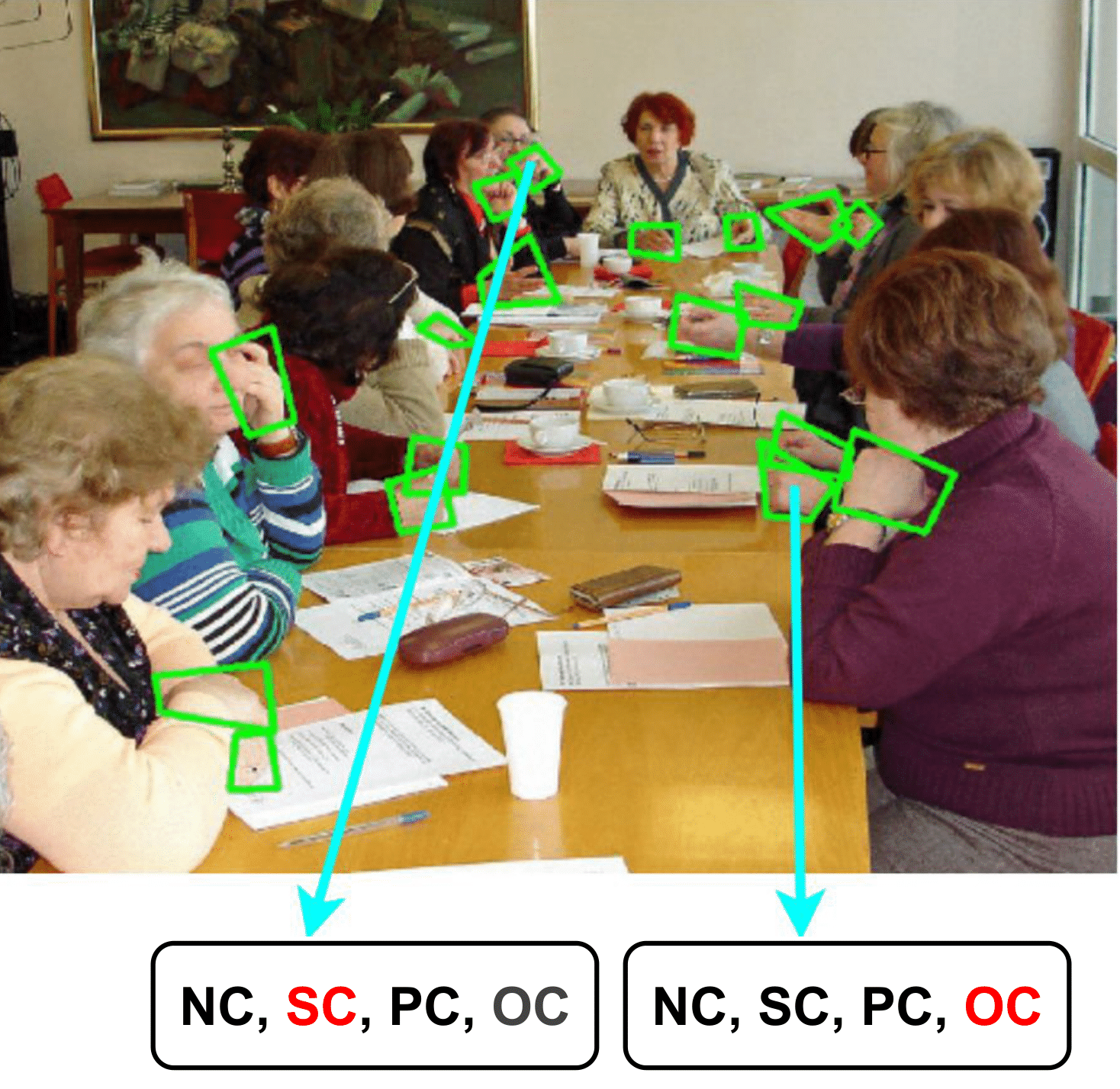

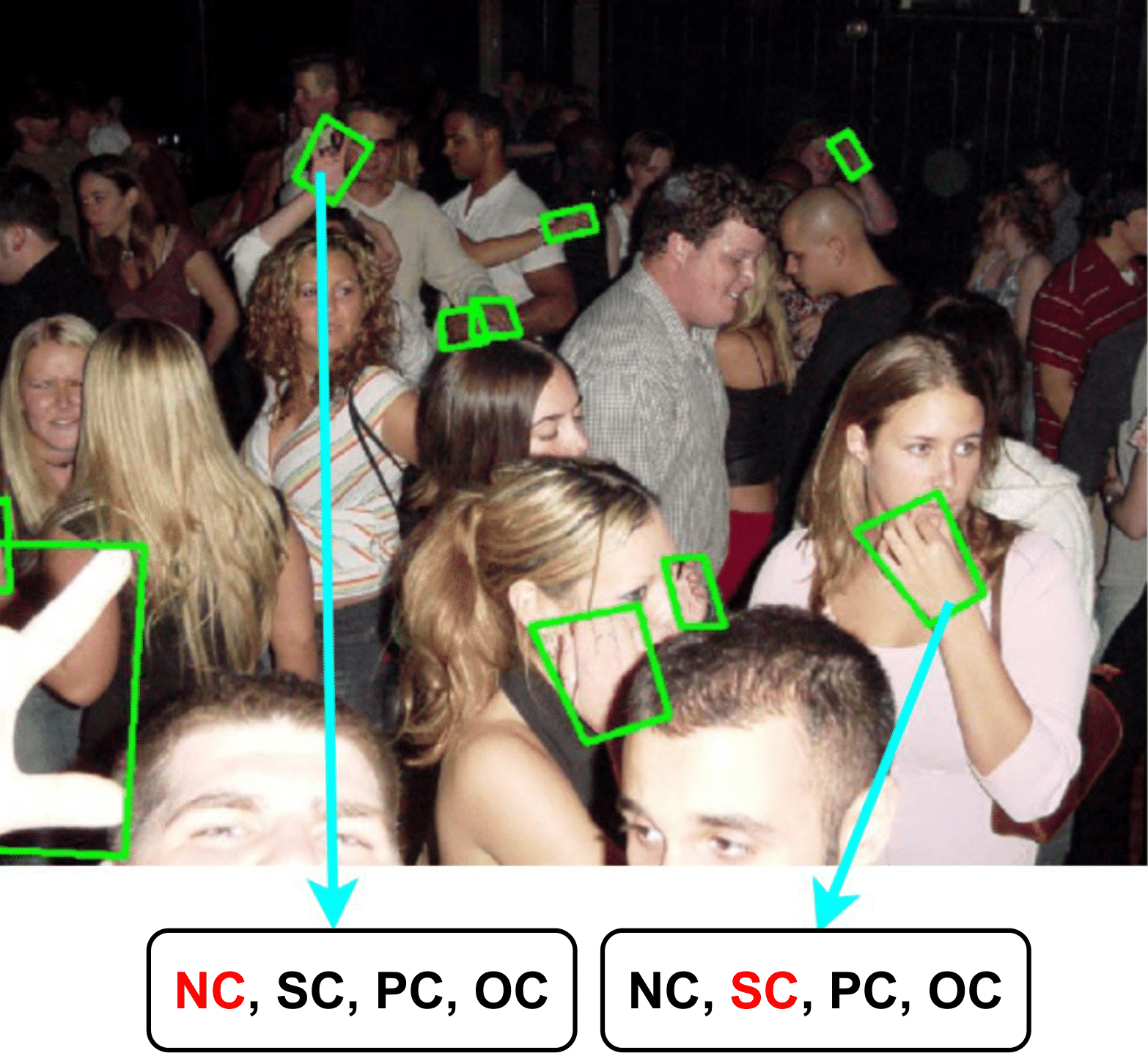

We annotate each hand with a quadrilateral bounding box and label its contact state with respect to four conditions: (1) No-Contact: the hand is not in contact with any object in the scene; (2) Self-Contact: the hand is in contact with another body part of the same person; (3) Other-Person-Contact: the hand is in contact with another person; and (4) Object-Contact: the hand is holding or touching an object other than people. These conditions are not mutually exclusive, and a hand can be in multiple states. For each hand instance, we annotate each of the four contact states by answering Yes, No, or Unsure.
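To make the annotation scheme concrete, here is a minimal Python sketch of how one hand instance could be represented in memory. It is an illustration only: the class names `Answer` and `HandAnnotation`, the field names, and the helper methods are hypothetical and do not describe the file format in which the dataset is actually distributed.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List, Tuple


class Answer(Enum):
    """The three possible answers for each contact state."""
    YES = "Yes"
    NO = "No"
    UNSURE = "Unsure"


# The four contact states described above.
CONTACT_STATES = (
    "No-Contact",
    "Self-Contact",
    "Other-Person-Contact",
    "Object-Contact",
)


@dataclass
class HandAnnotation:
    """Hypothetical in-memory record for one annotated hand."""
    quad: List[Tuple[float, float]]   # four (x, y) corners of the quadrilateral
    contact: Dict[str, Answer]        # one answer per contact state

    def __post_init__(self) -> None:
        if len(self.quad) != 4:
            raise ValueError("a quadrilateral needs exactly four corners")
        missing = set(CONTACT_STATES) - set(self.contact)
        if missing:
            raise ValueError(f"missing contact states: {sorted(missing)}")

    def axis_aligned_box(self) -> Tuple[float, float, float, float]:
        """Smallest (x_min, y_min, x_max, y_max) box enclosing the quadrilateral."""
        xs = [x for x, _ in self.quad]
        ys = [y for _, y in self.quad]
        return (min(xs), min(ys), max(xs), max(ys))

    def active_states(self) -> List[str]:
        """Contact states answered Yes; several can hold at once."""
        return [s for s in CONTACT_STATES if self.contact[s] is Answer.YES]


# Made-up example: a hand holding a cup while resting against the person's own face.
hand = HandAnnotation(
    quad=[(10.0, 20.0), (50.0, 15.0), (55.0, 60.0), (12.0, 65.0)],
    contact={
        "No-Contact": Answer.NO,
        "Self-Contact": Answer.YES,
        "Other-Person-Contact": Answer.UNSURE,
        "Object-Contact": Answer.YES,
    },
)
print(hand.axis_aligned_box())
print(hand.active_states())
```

Storing one answer per state, rather than a single label, reflects the fact that the states are not mutually exclusive and that Unsure is a separate answer from No.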

Our dataset has annotations for 20,516 images, of which 18,877 form the training set and 1,629 form the test set.

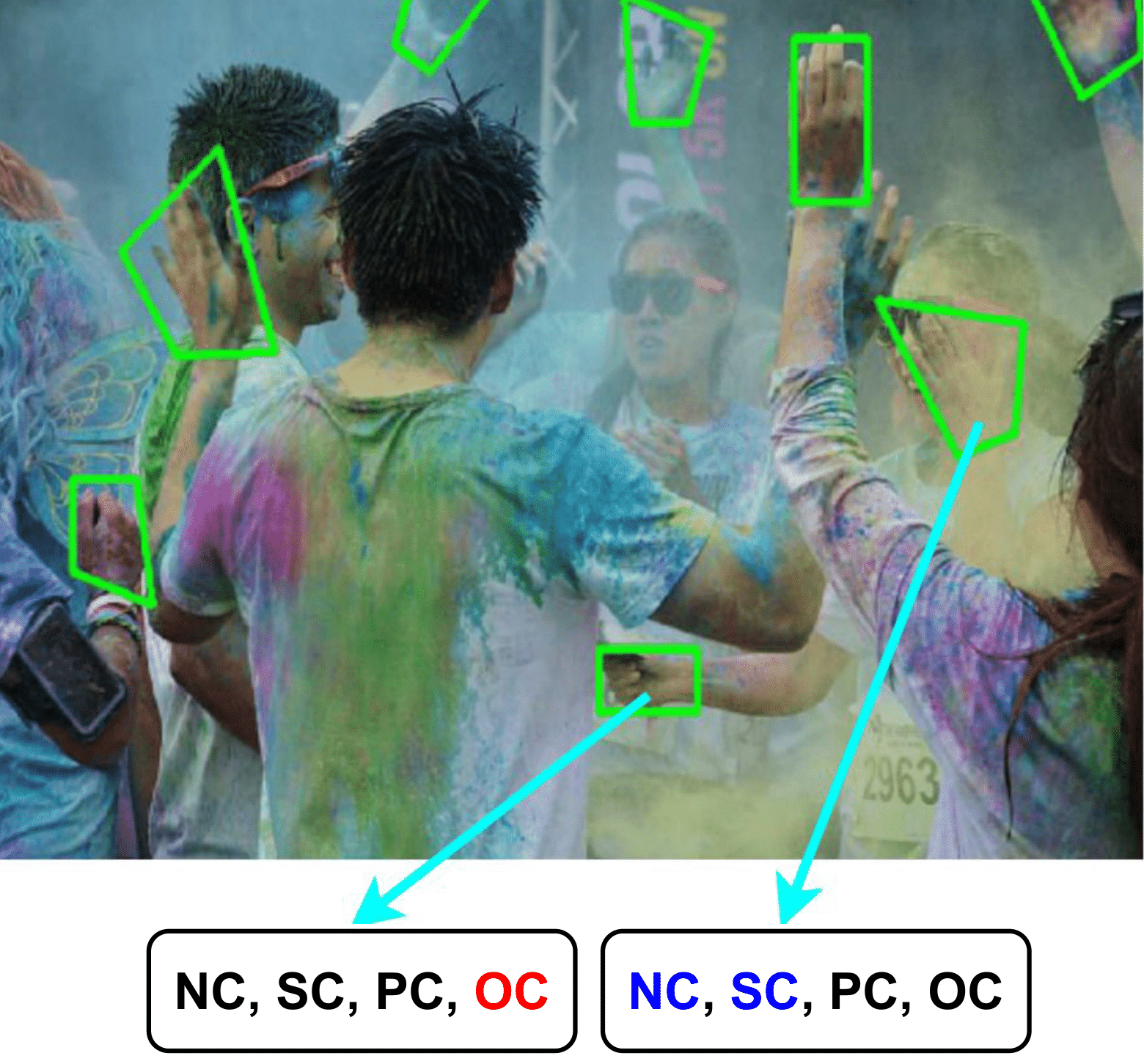

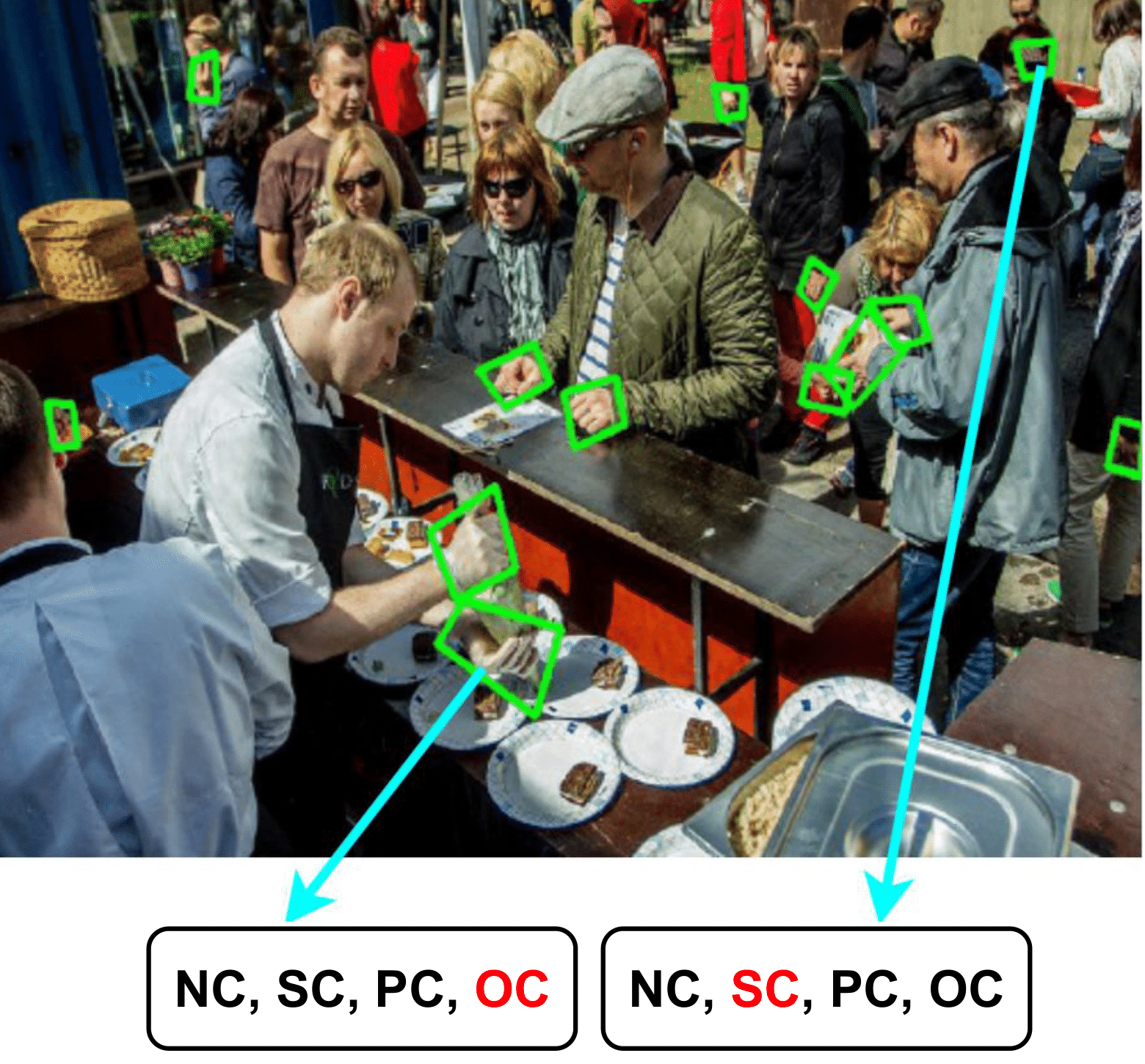

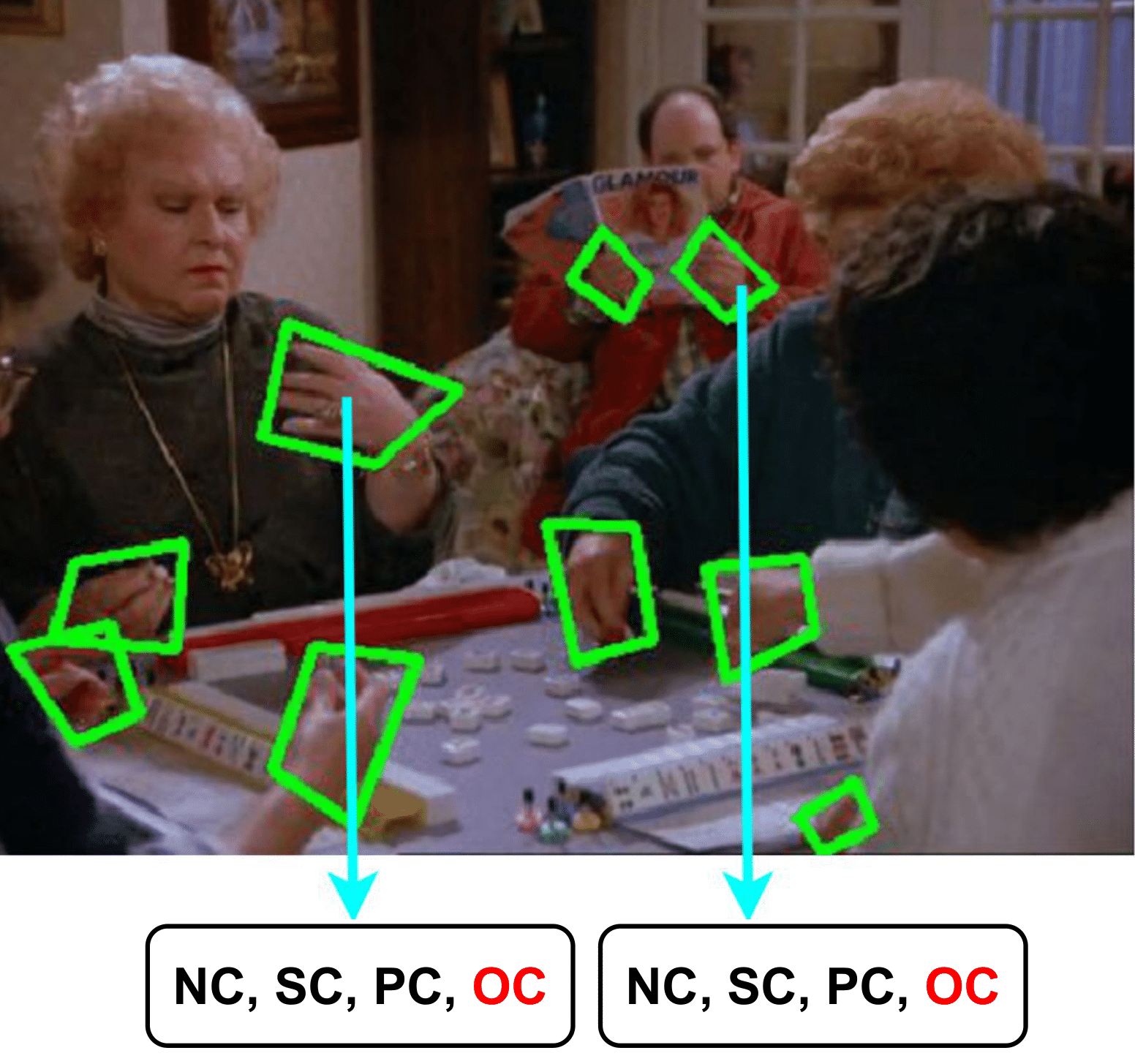

Examples

Sample data from ContactHands. Bounding box annotations are shown in green. To avoid clutter, we display contact states for only two hand instances per image. The notations NC, SC, PC, and OC denote No-Contact, Self-Contact, Other-Person-Contact, and Object-Contact, respectively. We highlight the contact state for a hand in red. If a contact state is unsure, we highlight it in blue.

Citation

If you find our work useful, please consider citing:

@inproceedings{contacthands_2020,
  title={Detecting Hands and Recognizing Physical Contact in the Wild},
  author={Supreeth Narasimhaswamy and Trung Nguyen and Minh Hoai},
  booktitle={Advances in Neural Information Processing Systems},
  year={2020},
}

Download

The dataset (4.5 GB) can be downloaded from VinAI Server and Google Drive.

Copyright and Disclaimer

Copyright:

Notwithstanding the publication and/or disclosure of this document, the data, and any material pertaining to this document (collectively the “Works”), all copyright and all rights therein are maintained by, and remain the proprietary property of, the authors and/or the copyright holders.

Disclaimer:

The Works are provided “AS IS” without warranties or conditions of any kind either expressed or implied. To the fullest extent possible under applicable law, the authors and/or the copyright holders disclaim any and all warranties and conditions, expressed or implied, including but not limited to, implied warranties or conditions of merchantability and fitness for a particular purpose, non-infringement or other violation of rights or breach of contract. The authors and/or the copyright holders of the Works do not warrant nor make any representations of any kind in relation to, or in connection with the use, accuracy, timeliness, completeness, efficacy, fitness, applicability, performance, security, availability or reliability of the Works, or the results from the use of the Works. In no event and under no circumstance shall the authors and/or the copyright holders of the Works be liable for any claim, damages or other liability, whether in an action of contract, tort or otherwise, arising out of or in connection with the Works, or the use of the Works.